How Do You Know That Your Model Is Predictive

How do you check the quality of your regression model in Python?

Linear regression is rooted strongly in the field of statistical learning, and therefore the model must be checked for 'goodness of fit'. This article shows you the essential steps of this task in a Python ecosystem.

Why it is important (and why you might be missing it)

For all the talk and hair-splitting over the intricacies of the latest deep neural net architectures and the amazing power of xgboost in Kaggle competitions, regression remains the top choice for daily use across a large portion of the industry that relies on data-driven analytics and machine learning (ML) techniques.

See this KDnuggets poll result from 2018–19 (by Matthew Mayo).

The technique of regression comes in many forms (linear, nonlinear, Poisson, tree-based), but the core idea remains almost the same across the board and can be applied to a wide variety of predictive analytics problems in finance, healthcare, the service industry, manufacturing, agriculture, etc.

Linear regression is the fundamental technique, which is rooted strongly in the time-tested theory of statistical learning and inference, and powers all the regression-based algorithms used in modern data science pipelines.

However, the success of a linear regression model also depends on some fundamental assumptions about the nature of the underlying data that it tries to model. See this article for a simple and intuitive understanding of these assumptions.

It is, therefore, extremely important to check the quality of your linear regression model by verifying whether these assumptions were "reasonably" satisfied (generally, visual analytics methods, which are subject to interpretation, are used to check the assumptions).

The problem is that checking the quality of the model is frequently a less prioritized aspect of a data science task flow, where other priorities dominate: prediction, scaling, deployment, and model tuning.

Does this assertion sound too bold? There is an easy test.

In an industry-standard Python-based data science stack, how many times have you used Pandas, NumPy, Scikit-learn, or even PostgreSQL for data acquisition, wrangling, visualization, and finally constructing and tuning your ML model? Plenty of times, I suppose?

Now, how many times have you used the statsmodels library to examine the model by running goodness-of-fit tests?

It is very common for a Python-based data science learning track to go like this,

The answer to the question "Is something missing?" is yes!

Often, there is plenty of discussion about regularization, bias-variance trade-off, or scalability (learning and complexity curves) plots. But is there sufficient discussion around the following plots and lists?

- Residuals vs. predicting variables plots

- Fitted vs. residuals plot

- Histogram of the normalized residuals

- Q-Q plot of the normalized residuals

- Shapiro-Wilk normality test on the residuals

- Cook's distance plot of the residuals

- Variance inflation factor (VIF) of the predicting features

It is clear that you have to wear the hat of a statistician, not only a data mining professional, for this part of the machine learning pipeline.

The issue with Scikit-learn

It can be safely assumed that the majority of statisticians-turned-data scientists run the goodness-of-fit tests regularly on their regression models.

But many young data scientists and analysts depend heavily, for data-driven modeling, on ML-focused packages like Scikit-learn, which, although an awesome library and almost a silver bullet for machine learning and prediction tasks, does not support easy and fast evaluation of model quality based on standard statistical tests.

Therefore, it is imperative that a good data science pipeline, in addition to using an ML-focused library like Scikit-learn, include some standardized set of code to evaluate the quality of the model using statistical tests.

In this article, we show such a standard set of evaluations for a multivariate linear regression problem. We will use the statsmodels library for regression modeling and statistical tests.

A brief overview of linear regression assumptions and the key visual tests

The assumptions

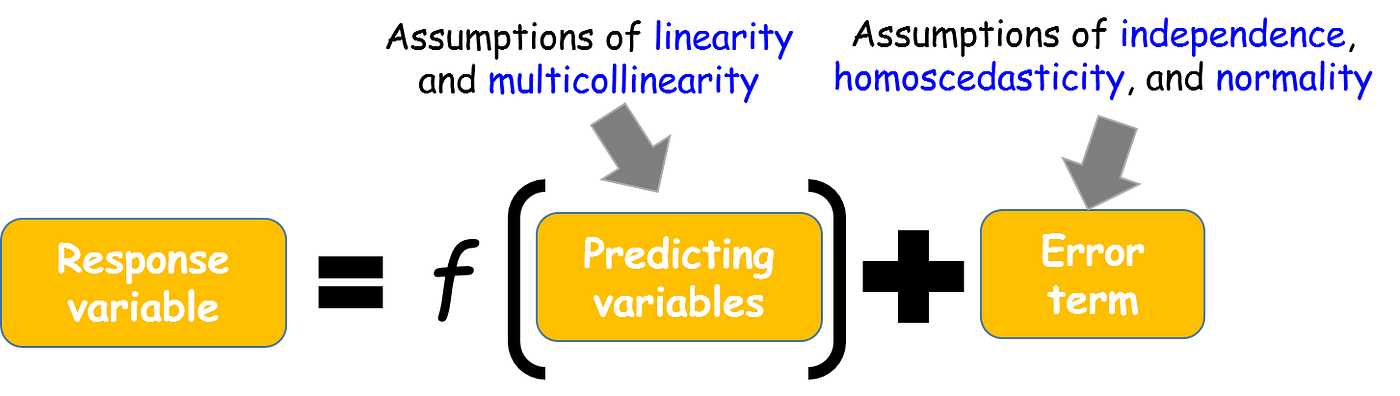

The four key assumptions that need to be tested for a linear regression model are:

- Linearity: The expected value of the dependent variable is a linear function of each independent variable, holding the others fixed (note this does not prevent you from using a nonlinear transformation of the independent variables, i.e. you can still model f(x) = ax² + bx + c, using both x² and x as predicting variables).

- Independence: The errors (residuals of the fitted model) are independent of each other.

- Homoscedasticity (constant variance): The variance of the errors is constant with respect to the predicting variables or the response.

- Normality: The errors are generated from a Normal distribution (of unknown mean and variance, which can be estimated from the data). Note that, unlike the top three above, this is not a necessary condition to perform linear regression. However, without this assumption being satisfied, you cannot easily calculate the so-called 'confidence' or 'prediction' intervals, as the well-known analytical expressions corresponding to the Gaussian distribution cannot be used.

For multiple linear regression, judging multicollinearity is also critical from the statistical inference point of view. This assumption requires minimal or no linear dependence between the predicting variables.

Outliers can also be an issue impacting the model quality by having a disproportionate influence on the estimated model parameters.

Here is a visual recap,

What plots can be checked?

So, error terms are pretty important.

But there is a piece of bad news. We can never know the true errors, no matter how much data we have. We can only estimate and draw inferences about the distribution from which the data is generated.

Therefore, the proxy for the true errors is the residuals, which are simply the differences between the observed values and the fitted values.
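To make the distinction between errors and residuals concrete, here is a minimal sketch on synthetic data (the data, coefficients, and variable names are assumptions for illustration, not the article's dataset). Because we simulate the data ourselves, we can compare the normally unobservable true errors with the residuals of a least-squares fit.

```python
import numpy as np

# Synthetic data (an assumption for illustration): y = 2 + 0.5*x + error
rng = np.random.default_rng(0)
n = 200
x = rng.uniform(0, 10, size=n)
true_errors = rng.normal(0, 1.0, size=n)   # never observable with real data
y = 2.0 + 0.5 * x + true_errors

# Ordinary least squares on the design matrix [1, x]
X = np.column_stack([np.ones(n), x])
beta_hat, *_ = np.linalg.lstsq(X, y, rcond=None)

fitted = X @ beta_hat
residuals = y - fitted                     # observed values minus fitted values

# With an intercept in the model, the residuals average to zero by construction
print("mean residual:", residuals.mean())
print("corr(residuals, true errors):", np.corrcoef(residuals, true_errors)[0, 1])
```

With real data only `residuals` is available, which is why all the diagnostics that follow are computed on it.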

Bottom line: we need to plot the residuals and check their random nature, variance, and distribution to evaluate the model quality. This is the visual analytics needed for the goodness-of-fit assessment of a linear model.

Apart from this, multicollinearity can be checked from the correlation matrix and heatmap, and outliers in the data (residuals) can be checked by so-called Cook's distance plots.

Example of regression model quality evaluation

The entire code repository for this example can be found on the author's GitHub.

We are using the concrete compressive strength prediction problem from the UCI ML portal. The concrete compressive strength is a highly complex function of age and ingredients. Can we predict the strength from the measured values of these parameters?

Scatterplot of variables to check for linearity

We can simply check the scatterplot for visual inspection of the assumption of linearity.
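A minimal sketch of such a scatterplot check is shown below. It uses a small synthetic table as a stand-in for the concrete dataset; the column names (`cement`, `water`, `age`, `strength`) and the generating formula are assumptions for illustration only.

```python
import matplotlib
matplotlib.use("Agg")  # headless backend so the sketch runs anywhere; drop in a notebook
import matplotlib.pyplot as plt
import numpy as np
import pandas as pd

# Synthetic stand-in for the concrete data (hypothetical columns and coefficients)
rng = np.random.default_rng(1)
n = 300
df = pd.DataFrame({
    "cement": rng.uniform(100, 500, n),
    "water": rng.uniform(120, 250, n),
    "age": rng.integers(1, 365, n),
})
df["strength"] = (0.08 * df["cement"] - 0.10 * df["water"]
                  + 6.0 * np.log(df["age"]) + rng.normal(0, 4, n))

# One panel per predictor: response on the y-axis, predictor on the x-axis
predictors = ["cement", "water", "age"]
fig, axes = plt.subplots(1, len(predictors), figsize=(12, 3.5), sharey=True)
for ax, col in zip(axes, predictors):
    ax.scatter(df[col], df["strength"], s=8, alpha=0.6)
    ax.set_xlabel(col)
axes[0].set_ylabel("strength")
fig.tight_layout()
# fig.savefig("linearity_scatter.png") or plt.show() to view the panels
```

In this synthetic example the `age` panel bends (the response depends on the logarithm of age), which is the kind of curvature that suggests a transformation before fitting a linear model.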

Pairwise scatter plots and correlation heatmap for checking multicollinearity

We can use the pairplot function from the seaborn library to plot the pairwise scatterplots of all combinations.

Furthermore, if the data is loaded in Pandas, we can easily compute the correlation matrix and pass it on to the special plotting function of statsmodels to visualize the correlation as a heatmap.
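The correlation heatmap step can be sketched as follows. The data is synthetic, with one column deliberately built to be nearly collinear with another; the column names are hypothetical. We assume here that the statsmodels plotting function in question is `plot_corr` from `statsmodels.graphics.correlation`.

```python
import matplotlib
matplotlib.use("Agg")  # headless backend; drop in a notebook
import numpy as np
import pandas as pd
from statsmodels.graphics.correlation import plot_corr

# Synthetic predictors (hypothetical names); "binder" is nearly collinear with "cement"
rng = np.random.default_rng(2)
n = 300
cement = rng.uniform(100, 500, n)
df = pd.DataFrame({
    "cement": cement,
    "water": rng.uniform(120, 250, n),
    "binder": 0.9 * cement + rng.normal(0, 15, n),
})

corr = df.corr()                       # pairwise Pearson correlations
print(corr.round(2))

# Heatmap of the correlation matrix; strongly colored off-diagonal cells flag collinearity
fig = plot_corr(corr.values, xnames=list(corr.columns))
# fig.savefig("corr_heatmap.png") to view it
```

For the pairwise scatterplots themselves, `seaborn.pairplot(df)` on the same DataFrame gives the grid described above.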

Model fitting using the statsmodels ols() function

The main model fitting is done using the statsmodels OLS method. It is an amazing linear model fit utility that feels very much like the powerful 'lm' function in R. Best of all, it accepts the R-style formula for constructing the full or partial model (i.e. involving all or some of the predicting variables).

You may question, in the age of big data, why bother creating a partial model instead of throwing all the data in? That is because confounding or hidden bias may be present in the data, which can be addressed only by controlling for certain factors.

In any case, the summary of the fitted model already provides rich statistical information about the model, such as t-statistics and p-values corresponding to all the predicting variables, R-squared and adjusted R-squared, AIC and BIC, etc.
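As a rough illustration of the formula interface, the sketch below fits a full and a partial model with `statsmodels.formula.api.ols`. The DataFrame is synthetic and its column names are hypothetical, so the numbers have nothing to do with the concrete dataset.

```python
import numpy as np
import pandas as pd
import statsmodels.formula.api as smf

# Synthetic stand-in data (hypothetical columns and coefficients)
rng = np.random.default_rng(3)
n = 300
df = pd.DataFrame({
    "cement": rng.uniform(100, 500, n),
    "water": rng.uniform(120, 250, n),
    "age": rng.uniform(1, 365, n),
})
df["strength"] = (0.08 * df["cement"] - 0.10 * df["water"]
                  + 0.05 * df["age"] + rng.normal(0, 4, n))

# Full model: all predictors, written as an R-style formula
full_model = smf.ols("strength ~ cement + water + age", data=df).fit()

# Partial model: only some of the predictors
partial_model = smf.ols("strength ~ cement + age", data=df).fit()

# The summary lists coefficients, t-statistics, p-values, R-squared, AIC, BIC, ...
print(full_model.summary())
print("partial R-squared:", round(partial_model.rsquared, 3))
```

The individual quantities are also available as attributes of the fitted result (`params`, `pvalues`, `rsquared`, `rsquared_adj`, `aic`, `bic`), which is handy when the checks need to be automated.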

Residuals vs. predicting variables plots

Next, we can plot the residuals versus each of the predicting variables to check the independence assumption. If the residuals are distributed uniformly at random around the zero line (the x-axis) and do not form specific clusters, then the assumption holds true. In this particular problem, we observe some clusters.

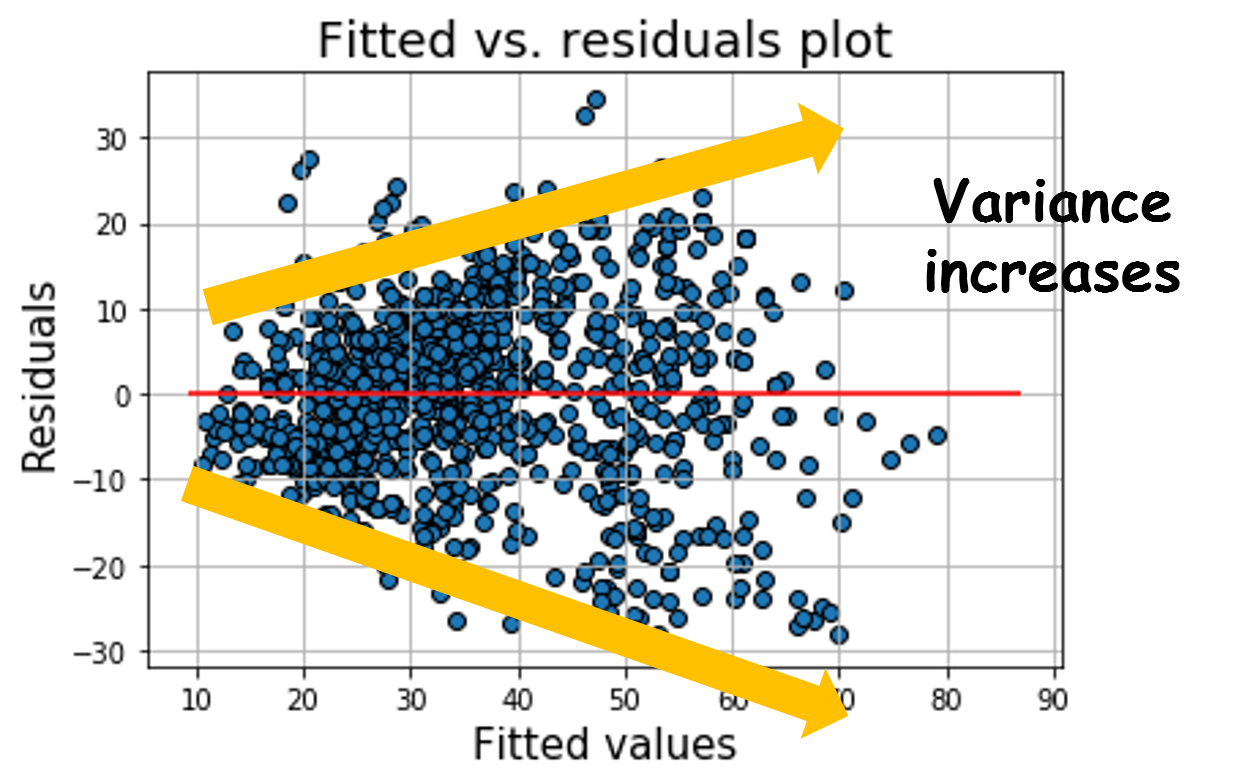
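Residual-versus-predictor plots like these can be produced along the following lines. This is a sketch on synthetic data with hypothetical column names; the response is generated from the logarithm of `age` while the model uses `age` linearly, so one panel should show a visible pattern.

```python
import matplotlib
matplotlib.use("Agg")  # headless backend; drop in a notebook
import matplotlib.pyplot as plt
import numpy as np
import pandas as pd
import statsmodels.formula.api as smf

# Synthetic stand-in data (hypothetical columns); strength depends on log(age)
rng = np.random.default_rng(4)
n = 300
df = pd.DataFrame({
    "cement": rng.uniform(100, 500, n),
    "water": rng.uniform(120, 250, n),
    "age": rng.uniform(1, 365, n),
})
df["strength"] = (0.08 * df["cement"] - 0.10 * df["water"]
                  + 6.0 * np.log(df["age"]) + rng.normal(0, 4, n))

# Deliberately misspecified: age enters linearly
model = smf.ols("strength ~ cement + water + age", data=df).fit()

predictors = ["cement", "water", "age"]
fig, axes = plt.subplots(1, len(predictors), figsize=(12, 3.5), sharey=True)
for ax, col in zip(axes, predictors):
    ax.scatter(df[col], model.resid, s=8, alpha=0.6)
    ax.axhline(0.0, color="k", lw=1)   # residuals should scatter evenly around this line
    ax.set_xlabel(col)
axes[0].set_ylabel("residual")
fig.tight_layout()
```

The `cement` and `water` panels should look like structureless bands, while the `age` panel curves away from zero at small ages, hinting at the missing transformation.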

Fitted vs. residuals plot to check homoscedasticity

When we plot the fitted response values (as per the model) vs. the residuals, we clearly observe that the variance of the residuals increases with response variable magnitude. Therefore, the problem does not respect homoscedasticity and some kind of variable transformation may be needed to improve model quality.

Histogram and Q-Q plot of normalized residuals

To check the assumption of the normality of the data generating process, we can simply plot the histogram and the Q-Q plot of the normalized residuals.

Additionally, we can run the Shapiro-Wilk test on the residuals to check for Normality.

Outlier detection using Cook's distance plot

Cook's distance essentially measures the effect of deleting a given observation. Points with a large Cook's distance need to be closely examined for being potential outliers. We can plot the Cook's distance using a special outlier influence class from statsmodels.

Variance inflation factors

The OLS model summary for this dataset shows a warning for multicollinearity. But how do we check which factors are causing it?

We can compute the variance inflation factor (VIF) for each predicting variable. It is the ratio of the variance of a coefficient estimate in a model with multiple terms to its variance in a model with that term alone. Again, we take advantage of the outlier influence module in statsmodels.

Other residual diagnostics

Statsmodels has a wide variety of other diagnostic tests for checking model quality. You can take a look at these pages.

- Residual diagnostics tests

- Goodness-of-fit tests

Summary and thoughts

In this article, we covered how one can add essential visual analytics for model quality evaluation in linear regression: various residual plots, normality tests, and checks for multicollinearity.

One can even think of creating a simple suite of functions capable of accepting a scikit-learn-type estimator and generating these plots for the data scientist to quickly check the model quality.
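One possible shape for such a helper is sketched below. The function name `quick_residual_report` and its return format are hypothetical, and it only relies on the estimator having a `predict` method; it is an illustration of the idea, not an existing scikit-learn or statsmodels API.

```python
import matplotlib
matplotlib.use("Agg")  # headless backend; drop in a notebook
import matplotlib.pyplot as plt
import numpy as np
from scipy import stats
from sklearn.linear_model import LinearRegression


def quick_residual_report(estimator, X, y):
    """Hypothetical helper: residual plots and a Shapiro-Wilk test for a fitted estimator."""
    fitted = estimator.predict(X)
    resid = np.asarray(y) - fitted
    shapiro_stat, shapiro_p = stats.shapiro(resid)

    fig, (ax_fit, ax_qq) = plt.subplots(1, 2, figsize=(10, 4))
    ax_fit.scatter(fitted, resid, s=8, alpha=0.6)      # fitted vs. residuals
    ax_fit.axhline(0.0, color="k", lw=1)
    ax_fit.set_xlabel("fitted value")
    ax_fit.set_ylabel("residual")
    stats.probplot(resid, dist="norm", plot=ax_qq)     # normal Q-Q plot of the residuals

    return {"residuals": resid, "shapiro_stat": shapiro_stat,
            "shapiro_p": shapiro_p, "figure": fig}


# Usage on synthetic data (an assumption for illustration)
rng = np.random.default_rng(9)
X = rng.uniform(0, 10, size=(250, 3))
y = X @ np.array([1.5, -2.0, 0.5]) + 4.0 + rng.normal(0, 1.0, 250)

report = quick_residual_report(LinearRegression().fit(X, y), X, y)
print("Shapiro-Wilk p-value:", round(report["shapiro_p"], 4))
```

Because it works from predictions alone, the same function could be pointed at any regressor that follows the scikit-learn interface, although the normality and variance checks are most meaningful for linear models.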

Currently, although scikit-learn does not have detailed statistical tests or plotting capabilities for model quality evaluation, Yellowbrick is a promising Python library which can add intuitive visualization capability on scikit-learn objects. We can hope that in the near future, statistical tests can be added to scikit-learn ML estimators directly.


Source: https://towardsdatascience.com/how-do-you-check-the-quality-of-your-regression-model-in-python-fa61759ff685
